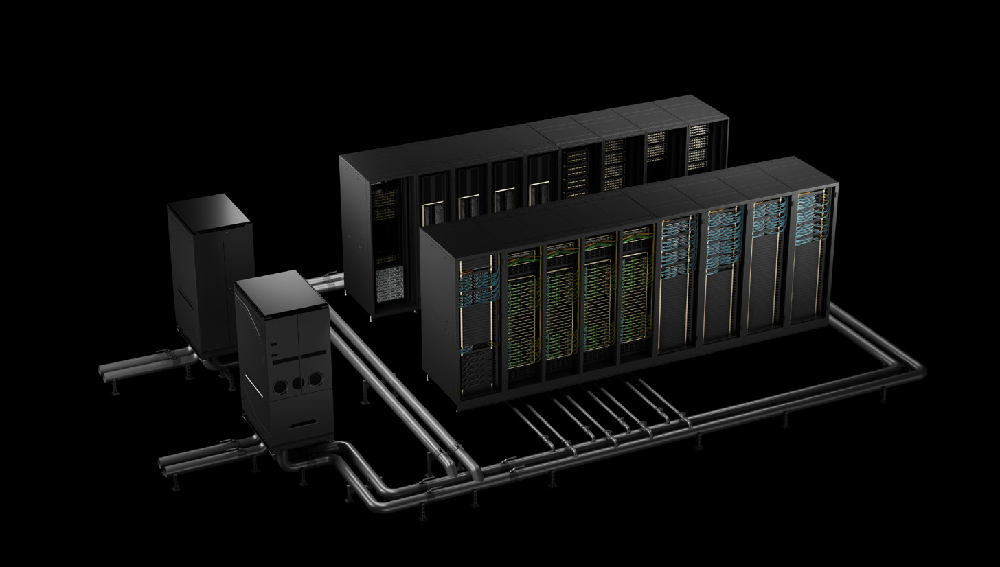

Liquid Cooled Rack-Scale: 72 NVIDIA B200 GPUs, 36 Grace CPUs

The Liquid Cooled Rack-Scale Solution combines 72 NVIDIA B200 GPUs and 36 Grace CPUs in a liquid-cooled rack-scale system.

It targets AI and high-performance computing (HPC) workloads while also meeting enterprise server demands.

This solution is made for data centers that need dense GPU compute in a liquid-cooled AI server.

It suits AI and deep learning applications, serving as a GPU server for both training and inference.

Liquid cooling technology enhances thermal management and data center cooling, ensuring efficient operation and longevity. This feature is crucial for maintaining optimal performance in demanding environments.

The solution supports versatile storage configurations, including rackmount storage server options. It integrates with existing infrastructure, which reduces deployment complexity, and it can be paired with a hot-swap storage server tier when appropriate.

Aimed at IT professionals and data center managers, this solution pairs cutting-edge high-performance computing hardware with enterprise-grade reliability and practical applications.

Overview of the Liquid Cooled Rack-Scale Solution

The Liquid Cooled Rack-Scale Solution combines 72 NVIDIA B200 GPUs with 36 Grace CPUs. The B200 GPUs are built on the NVIDIA Blackwell architecture, so the system aligns with that generation of GPU platform standards. This integration provides processing capabilities tailored for demanding workloads.

Liquid cooling technology is a standout feature. It enhances cooling efficiency, which is essential for high-density server environments. This method reduces thermal strain, thus improving performance and reliability.

This solution is optimized for AI, deep learning, and high-performance computing (HPC) workloads. Its architecture supports diverse applications across data centers, and the hardware is intended for enterprise deployments that need to scale.

Key components of this solution include:

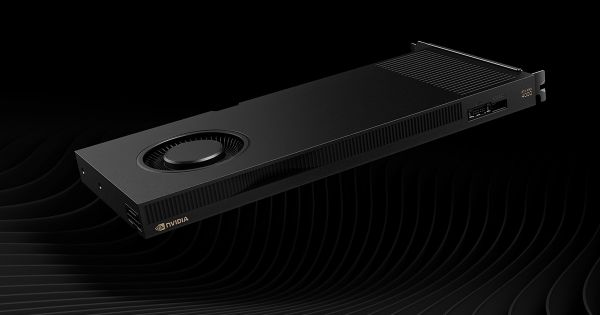

- 72 NVIDIA B200 GPUs for superior parallel processing.

- 36 Grace CPUs offering robust computing power.

- Advanced liquid cooling for effective thermal management.

This combination improves operational efficiency, making it well suited to next-generation data centers and deployments that need to scale.

Key Hardware: 72 NVIDIA B200 GPUs and 36 Grace CPUs

The 72 NVIDIA B200 GPUs are at the heart of this solution. They offer tremendous parallel processing power, crucial for AI and HPC tasks. These GPUs ensure that computational tasks are handled efficiently and swiftly.

Complementing the GPUs are 36 Grace CPUs. Known for their high performance, these CPUs drive complex computing operations. Their architecture is designed to handle large datasets and intricate calculations effortlessly.

Together, the NVIDIA GPUs and Grace CPUs create a powerful synergy. This combination ensures optimal performance across a variety of workloads. From data analytics to AI training, the hardware excels in demanding environments.
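The 72 GPU and 36 CPU counts work out to two GPUs per Grace CPU. The following is a minimal Python sketch of that arithmetic, and of how the totals grow with rack count. The names `RackTotals` and `rack_totals` are hypothetical and exist only for illustration; only the per-rack counts come from the description above.

```python
from dataclasses import dataclass

# Per-rack counts taken from the configuration described in this article.
GPUS_PER_RACK = 72
CPUS_PER_RACK = 36


@dataclass(frozen=True)
class RackTotals:
    """Aggregate GPU and CPU counts for a number of identical racks."""
    racks: int
    gpus: int
    cpus: int

    @property
    def gpus_per_cpu(self) -> float:
        # Ratio of GPUs to CPUs; independent of the number of racks.
        return self.gpus / self.cpus


def rack_totals(racks: int) -> RackTotals:
    """Return total GPUs and CPUs for `racks` identical racks (hypothetical helper)."""
    if racks < 1:
        raise ValueError("racks must be at least 1")
    return RackTotals(racks, racks * GPUS_PER_RACK, racks * CPUS_PER_RACK)


if __name__ == "__main__":
    totals = rack_totals(4)
    print(totals.gpus, totals.cpus, totals.gpus_per_cpu)
```

The ratio stays fixed as racks are added, so capacity planning of this kind reduces to choosing a rack count.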

For organizations standardizing on HGX platforms, companion nodes such as an NVIDIA HGX B300 server can complement this rack-scale design in mixed-cluster strategies.

Mixed environments can also incorporate dual AMD EPYC and Intel Xeon server nodes, alongside single-CPU server options, to match specific application requirements. Organizations may also deploy an AMD MI350X server tier for ROCm-based acceleration where appropriate.

Key hardware features include:

- 72 NVIDIA B200 GPUs for enhanced parallelism

- 36 Grace CPUs for robust computation

- Support for large-scale data processing tasks

This hardware setup is engineered to meet future computing needs. It supports scalability, allowing businesses to expand as technology evolves.

Advanced Liquid Cooling and Thermal Management

Efficient thermal management is crucial for high-performance systems. This solution employs advanced liquid cooling to maintain optimal temperatures. This method reduces overheating risks and improves system reliability.

Liquid cooling offers several benefits over traditional cooling methods. It provides quieter operation due to reduced fan usage. Additionally, it enhances component longevity by evenly distributing heat.

Key advantages of liquid cooling include:

- Improved heat dissipation

- Lower noise levels

- Enhanced component lifespan

This cooling technology ensures the system runs efficiently under heavy loads. It is particularly suited for data centers where performance and energy efficiency are paramount. By integrating this advanced thermal management system, businesses can achieve robust and reliable operations.
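To make the heat-dissipation point concrete, the standard heat balance Q = ṁ · c_p · ΔT relates a heat load to the coolant flow needed to carry it away. The sketch below is a rough estimate only: the 100 kW heat load and 10 K coolant temperature rise in the usage lines are hypothetical illustration values, not specifications of this system, and the constants assume plain water rather than a glycol mix.

```python
# Approximate properties of plain water near room temperature (assumption:
# real loops often use water/glycol mixes with different values).
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0
WATER_DENSITY_KG_PER_L = 0.997


def coolant_flow_lpm(
    heat_load_w: float,
    delta_t_k: float,
    specific_heat: float = WATER_SPECIFIC_HEAT_J_PER_KG_K,
    density: float = WATER_DENSITY_KG_PER_L,
) -> float:
    """Estimate the coolant flow in litres per minute needed to remove
    `heat_load_w` watts with a coolant temperature rise of `delta_t_k` kelvin.

    Uses Q = m_dot * c_p * delta_T, solved for the mass flow m_dot.
    """
    if heat_load_w < 0:
        raise ValueError("heat_load_w must be non-negative")
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_per_s = heat_load_w / (specific_heat * delta_t_k)
    litres_per_s = mass_flow_kg_per_s / density
    return litres_per_s * 60.0


if __name__ == "__main__":
    # Hypothetical example values, not vendor figures.
    print(f"{coolant_flow_lpm(100_000, 10):.1f} L/min")
```

Halving the allowed temperature rise doubles the required flow, which is why loop design trades pump capacity against coolant temperature.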

Storage, Memory, and Expansion Capabilities

In today's data-intensive world, storage and memory capabilities are pivotal. This rack-scale solution delivers versatile storage options, catering to diverse enterprise needs. It supports hot-swap drives, allowing quick replacements without downtime.

The system is equipped with scalable DDR5 memory, which improves memory throughput. This capability is vital for demanding applications like AI and big data analytics, where it supports efficient data handling and rapid access to information.

Expansion capabilities are another highlight of this solution. It offers several options to accommodate future growth, including support for 10 NVMe slots in adjacent tiers when paired with complementary storage nodes.

Key features include:

- Versatile hot-swap storage options

- Scalable DDR5 memory support

- Flexible expansion slots

Deployments can integrate 3U storage servers or 4U enterprise storage systems with 18 or 36 hot-swap bays, as well as rackmount storage server appliances with 8 drive bays, depending on requirements. These configurations can also serve as a storage layer for large datasets, including options like the NTS Elite Vault 4U storage chassis with 38 hot-swap drive bays.

These features enable seamless integration into existing infrastructures. They provide the flexibility necessary for evolving business demands. Such capabilities ensure the system remains relevant and effective for years to come.
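As a rough sizing aid for the bay counts mentioned in this section, the sketch below multiplies bays by a per-drive capacity. The 20 TB drive size in the usage lines is a hypothetical value, the helper names are illustrative, and the calculation assumes every bay holds the same drive and ignores RAID or erasure-coding overhead.

```python
# Bay counts taken from the storage tiers named in this section.
TIER_BAYS = {
    "8-bay": 8,
    "18-bay": 18,
    "36-bay": 36,
    "38-bay": 38,
}


def raw_capacity_tb(bays: int, drive_tb: float) -> float:
    """Raw capacity in TB for `bays` identical drives of `drive_tb` TB each."""
    if bays < 0 or drive_tb < 0:
        raise ValueError("bays and drive_tb must be non-negative")
    return bays * drive_tb


def capacity_by_tier(drive_tb: float) -> dict[str, float]:
    """Raw capacity per tier, assuming the same drive size in every bay."""
    return {name: raw_capacity_tb(bays, drive_tb) for name, bays in TIER_BAYS.items()}


if __name__ == "__main__":
    # Hypothetical 20 TB drives; substitute the drive size actually deployed.
    for tier, capacity in capacity_by_tier(20.0).items():
        print(f"{tier}: {capacity:.0f} TB raw")
```

Usable capacity will be lower once redundancy is applied, so these figures are an upper bound.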

Performance for AI, HPC, and Data Center Workloads

This rack-scale solution is engineered to tackle the most demanding computational tasks. AI and HPC workloads require immense processing power, which is expertly delivered by the 72 NVIDIA B200 GPUs. These GPUs are optimized for parallel processing, ensuring efficient execution of complex algorithms.

The 36 Grace CPUs provide additional computational support, handling tasks that require swift processing speeds. These CPUs are designed to complement the GPUs, balancing the workload for optimum performance. Together, they create an environment suitable for data-heavy applications, ensuring consistent and rapid data throughput.

Moreover, this solution excels in data center applications. It supports robust performance levels while maintaining energy efficiency. It is well suited to environments that demand high compute density coupled with low operational costs, and it can serve as the foundation for a data center GPU server footprint.

Performance highlights include:

- 72 NVIDIA B200 GPUs for unparalleled parallel processing

- 36 Grace CPUs enhancing computational power

- Optimized for energy-efficient data center operations

These features ensure that businesses can handle intensive AI, HPC, and data center workloads with ease. The system's architecture promotes efficient resource utilization, maximizing output without excessive energy consumption. This makes it a formidable tool for any enterprise aiming to excel in computing-intensive industries.

Integration, Scalability, and Future-Proofing

The liquid-cooled rack-scale solution integrates seamlessly with existing data center infrastructures. Its design ensures easy deployment without major changes to current setups. This compatibility reduces the complexity of implementation, saving time and resources.

The solution is built with scalability at its core. As businesses grow, they can expand their computing capabilities without complete overhauls, which helps organizations adapt to technological advancements. It functions as a scalable enterprise server backbone for mixed compute and storage tiers, and it is based on the NVIDIA Blackwell platform.

In heterogeneous environments, it can sit alongside a 2U enterprise server or 4U server footprint, including Supermicro 4U server and short-depth server options at the edge. Teams can also incorporate enterprise SSD server storage, single-CPU server nodes for utility tasks, and rackmount or tower workstation systems for development workflows.

Use Cases and Application Scenarios

This liquid-cooled rack-scale solution excels in several demanding environments. It is ideal for AI and high-performance computing (HPC) tasks. Its design supports high-density GPU configurations, making it perfect for machine learning models.

This solution serves diverse scenarios, including:

- Data analytics requiring intensive computing power

- Scientific research simulations demanding precision

- Enterprise applications needing robust storage and processing, which can be paired with a high capacity server or enterprise ssd server tier

With its versatility, the solution adapts to various enterprise needs. It enables businesses to handle complex workloads efficiently. By addressing specific use cases, it ensures enhanced performance and reliability across diverse applications.

Complementary platforms and form factors

For broader deployments, it pairs well with:

- NTS Elite Fusion 6U liquid-cooled GPU server for AI & HPC workloads

- NTS Elite Apex 8U GPU server with 8 NVIDIA A100 GPUs for deep learning & AI workloads

- NTS Elite Apex 8U AI compute server with 8 NVIDIA HGX B300 GPUs

- NVIDIA HGX B300 server

- NTS Elite Command 2U server with dual Intel Xeon and 16 drives

- NTS Elite Command 1U enterprise server with 5-drive support

- NTS Elite Command 2U mainstream server with single processor and 14 drive bays

- NTS Elite Studio 4U dual-processor server with 8 drive bays

- NTS Elite Vault Storage 3U enterprise storage with 18-drive capacity

- NTS Elite Vault 4U 38-bay storage server with 36 hot-swap 3.5-inch bays + 2 rear 2.5-inch bays

Related categories and form factors: 38 hot-swap server; 14-bay server; 18-bay storage; 36 hot-swap bays; 8-bay workstation; data center GPU server; rackmount storage server; hot-swap storage server; 3U storage server; 4U enterprise storage; 4U server; 2U enterprise server; dual-processor workstation; rackmount workstation; tower workstation server; dual AMD EPYC server; Intel Xeon server; single-CPU server; high-capacity server; scalable enterprise server; scalable performance server; scalable DDR5 server; 10 NVMe slots; AI HPC server; NVIDIA Blackwell compatible; liquid-cooled AI server.

Conclusion: The Next Generation of High-Performance Compute

The liquid-cooled rack-scale solution represents a significant leap in high-performance computing. With 72 NVIDIA B200 GPUs and 36 Grace CPUs, it offers unmatched processing power and efficiency. This solution addresses the growing demands of AI and data center applications.

By incorporating advanced features like direct liquid cooling and high-density configurations, it sets a new standard for thermal management and resource optimization. Organizations seeking a high-capacity, scalable platform will find it indispensable. As businesses strive for innovation, this solution ensures they remain at the forefront of technology advancements. It is truly the next generation in computing solutions.