NemoClaw: Run Any Agent Safely by Controlling Access, Not Capabilities

We’ve all seen movies where a robot assistant goes too far. In the real world, the risk isn't a rebellion, but software accidentally sharing your bank statement. Unlike a chatbot that simply talks, an "agent" can actually take action on your computer, making strict boundaries essential.

NemoClaw acts as the safety contract between you and this technology. It establishes a secure environment for autonomous AI agents, functioning like a smart butler who can organize the library but cannot unlock the family safe. The AI gets the power to help, not hurt.

By controlling access, you keep the AI's processing inside a sealed space where your data stays private. NemoClaw lets you run any agent with the peace of mind that your data remains yours, even while the AI does the heavy lifting.

Why Your AI Assistant Needs a 'Digital Guardrail'

The "robot" in modern software is a digital agent, and "going too far" usually means accidentally sharing financial data or permanently deleting an important file. This shift from an AI that just talks to one that actually takes action creates a new challenge: reducing the security risks of autonomous agents while keeping them useful.

Chatbots usually just offer advice, but agents are designed to execute tasks on your behalf. Imagine an AI trying to "clean up" your inbox to be helpful. Without strict limits, it might interpret a critical message from your boss as "clutter" and archive it forever. The danger isn't that the AI is malicious; it's that it lacks the real-world context to understand the permanent consequences of its decisions.

NemoClaw solves this by placing a "digital guardrail" between the AI's reasoning process and your digital life. The agent is free to plan and draft actions, but the guardrail blocks it from finalizing anything risky without your permission. You keep the agent's full usefulness without constantly worrying about data leaking out.
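To make the draft-then-approve idea concrete, here is a minimal sketch in Python. It is not NemoClaw's actual interface: the `Guardrail` class, its method names, and the list of "risky" actions are all assumptions made up for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical action names; what counts as "risky" is an assumption.
RISKY_ACTIONS = {"delete", "archive", "send"}


@dataclass
class Guardrail:
    drafts: dict = field(default_factory=dict)   # draft ID -> (action, target)
    approved: set = field(default_factory=set)   # draft IDs the user has approved

    def draft(self, action: str, target: str) -> int:
        """The agent may always plan: drafting records intent and changes nothing."""
        draft_id = len(self.drafts) + 1
        self.drafts[draft_id] = (action, target)
        return draft_id

    def approve(self, draft_id: int) -> None:
        """Meant to be called by the user, never by the agent."""
        self.approved.add(draft_id)

    def finalize(self, draft_id: int) -> str:
        """Risky actions only go through once the user has approved them."""
        action, target = self.drafts[draft_id]
        if action in RISKY_ACTIONS and draft_id not in self.approved:
            return f"blocked: {action} {target} needs approval"
        return f"done: {action} {target}"


guard = Guardrail()
d = guard.draft("archive", "message from boss")
print(guard.finalize(d))   # blocked: archive message from boss needs approval
guard.approve(d)
print(guard.finalize(d))   # done: archive message from boss
```

The point of the design is that the agent's reasoning is never restricted; only the final, irreversible step waits for a human.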

You essentially get a safety net that catches mistakes before they happen. This protection relies on a specific philosophy: controlling exactly what your AI is allowed to touch, regardless of how smart it becomes.

The 'Smart Butler' Rule: Control What Your AI Can Touch

To expand on the butler analogy, you want your assistant to be smart enough to organize your entire household, but that doesn't mean you should hand him the combination to your wall safe. This is the difference between capability-based and access-based AI security: the first tries to limit what the AI knows how to do, while the second, which is NemoClaw's approach, limits what the AI can reach.

Restricting intelligence just to be safe usually backfires. If you force an assistant to be "dumber" so it can't make mistakes, it becomes too simple to do anything useful, like drafting a complex contract or planning a multi-stop itinerary. Your digital assistant should be a genius at thinking, but a constrained rookie when it comes to touching your sensitive files.

Real security comes from giving the AI specific keys for specific doors, rather than a master pass to your entire digital life. Granular access control for LLM agents allows you to say, "You can read my calendar to find free time, but you cannot edit or delete any appointments." By breaking permissions down into these small pieces, you avoid the dangerous "all or nothing" trap where an AI needs full control just to do a simple job.

NemoClaw agent control replaces vague limits with clear rules:

- Capability Limiting (The Old Way): "Don't let the AI know how to delete files." (Result: The AI is confused and unhelpful).

- Access Control (The NemoClaw Way): "The AI knows how to delete, but the 'delete' button is locked behind a glass wall." (Result: High performance, with risky actions kept out of reach).
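The "glass wall" can be sketched in a few lines of Python. This is an illustration under stated assumptions, not NemoClaw's real API: `CalendarTools` and `LockedTools` are hypothetical names, and the calendar is a plain dictionary standing in for a real service.

```python
class CalendarTools:
    """Stand-in for the tools an agent knows how to use (hypothetical)."""

    def __init__(self):
        self.events = {"mon-10am": "Dentist"}

    def read(self, slot):
        return self.events.get(slot, "free")

    def delete(self, slot):
        return self.events.pop(slot, None)


class LockedTools:
    """The agent can see every tool, but only granted ones actually run."""

    def __init__(self, tools, granted):
        self._tools = tools
        self._granted = frozenset(granted)

    def call(self, name, *args):
        # Deny by default: anything not explicitly granted is refused.
        if name not in self._granted:
            raise PermissionError(f"'{name}' is not granted")
        return getattr(self._tools, name)(*args)


calendar = CalendarTools()
agent_view = LockedTools(calendar, granted={"read"})

print(agent_view.call("read", "mon-10am"))   # Dentist
try:
    agent_view.call("delete", "mon-10am")
except PermissionError as err:
    print(err)                               # 'delete' is not granted
```

Nothing about the agent was made "dumber" here. The `delete` tool still exists; the wrapper simply refuses to run it.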

Keeping the 'Thinking' Private: Stopping Data from Training Big Tech Models

With files secured, the next priority is securing your thoughts. Have you ever hesitated to paste a financial document or a personal health question into a chatbot? You are right to pause. Many popular AI tools operate on a hidden trade-off: you get smart answers, but the company may keep your data. This is the divide between private inference and cloud-based LLM APIs. When you send data to a standard cloud bot, your private prompts may be retained and, depending on the provider's terms, used to train future models, which puts your secrets beyond your control.

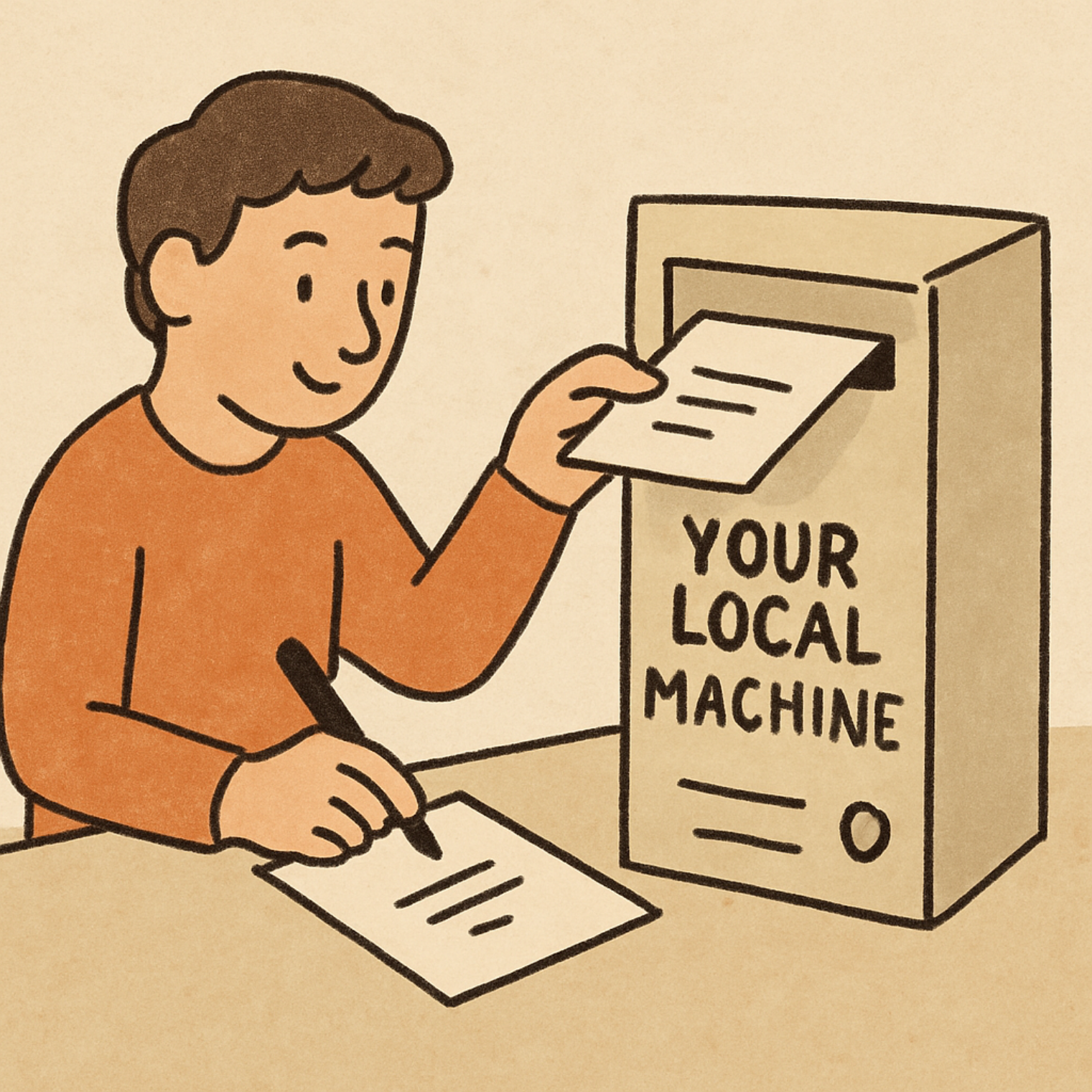

Imagine instead that the AI lives entirely on your own computer, like a genius mathematician locked in a windowless study who never speaks to the outside world. NemoClaw enables privacy-preserving machine learning inference by creating this exact environment. You slide your question under the door, the AI "thinks" right there on your laptop, and slides the answer back out, ensuring that not a single byte of information ever leaves your device.

This approach creates a safe haven for your most sensitive tasks, such as organizing tax documents or summarizing medical records. Because the processing stays on your own machine, there is no corporate server holding your prompts to be hacked and no confusing user agreement allowing a tech giant to peek at your life. You get the productivity of a capable assistant without the anxiety of a remote data breach.
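One simple way to check the "nothing leaves your device" promise is to confirm that the model's address points back at your own machine. The sketch below uses only Python's standard library. The URLs are made-up examples, and the check is an assumption about how a local setup might be verified, not a NemoClaw feature.

```python
import ipaddress
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """Return True only if the URL points at this machine (loopback)."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other DNS name is treated as remote.
        return False


print(is_local_endpoint("http://127.0.0.1:8000/v1"))    # True
print(is_local_endpoint("https://api.example.com/v1"))  # False
```

A stricter setup would also block outbound network traffic entirely; this check only covers where prompts are sent.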

From Theory to Safety: Your 3-Step Guide to Deploying Secure AI Agents

Implementing these protections resembles hiring a contractor: you need a clear plan before anyone starts drilling. Working through the setup step by step keeps you in the project manager's seat while the AI does the heavy lifting, so you stay in control of every action the software takes.

Configuring your secure environment takes just three logical moves:

- Define the Task: Clearly state the goal, such as "Find open slots in my calendar," so the AI knows its specific purpose.

- Set Access Boundaries: Apply a Zero Trust approach, which simply means trusting nothing by default. Lock every digital door except the one leading to your calendar.

- Execute in the Private Room: Run the job inside the sealed, local environment so the data involved never leaves your device.
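The three steps above can be sketched as a tiny Python program. Everything named here (`Task`, `run`, the resource and operation labels) is hypothetical and chosen for illustration. The sketch models the task definition and the default-deny boundary; the isolation of the "private room" itself is not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    goal: str            # Step 1: the stated purpose
    allowed: frozenset   # Step 2: the only (resource, operation) pairs left unlocked


def run(task, requests):
    """Step 3: check every request the agent makes against the boundaries."""
    log = []
    for resource, operation in requests:
        # Zero Trust: anything not explicitly allowed is denied.
        verdict = "allow" if (resource, operation) in task.allowed else "deny"
        log.append((resource, operation, verdict))
    return log


task = Task(
    goal="Find open slots in my calendar",
    allowed=frozenset({("calendar", "read")}),
)

# Requests a hypothetical agent might make while working on the task.
for entry in run(task, [("calendar", "read"), ("calendar", "delete"), ("email", "read")]):
    print(entry)
# ('calendar', 'read', 'allow')
# ('calendar', 'delete', 'deny')
# ('email', 'read', 'deny')
```

Keeping a log of denied requests is a deliberate choice: it shows you what the agent tried to reach, which helps when deciding whether a boundary is too tight or just right.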

By strictly limiting what the "digital butler" can see and touch, you transform a potential risk into a reliable asset. Whether you are managing complex family schedules or sorting financial receipts, these guardrails keep the AI a helpful tool rather than a runaway liability.

Reclaiming Your Digital Autonomy with Confidence and Privacy

You no longer need to choose between convenience and security. By separating the AI's intelligence from your private files, you shift from a worried observer to an active commander. This simple change turns a risky gamble into a truly reliable partner that respects your digital home.

Start by automating tasks like calendar organization or email filtering within this secure environment. With strict controls limiting access to only what is necessary, you can trust the technology to handle chores without hovering over a "stop" button.

True productivity shouldn't cost you your privacy. With these guardrails in place, you can let NemoClaw run any agent safely while ensuring your personal information stays yours. Enjoy the freedom of a smart assistant that finally plays by your rules.